LLMs as Operating Systems

This article is for people familiar with ChatGPT, GPT-3, and recent advances in machine learning; with generative AI tools like Midjourney and DALL-E; or with the AI features in Snapchat, Character AI, Loom, GPTInbox, or Duolingo's personalized tutors.

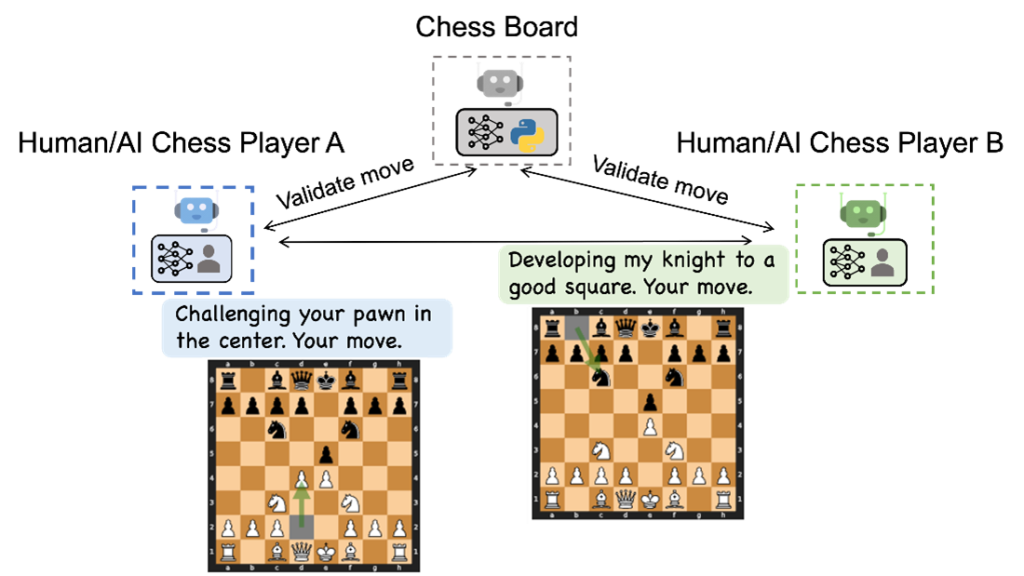

Last month the Microsoft Research team shared insights into AutoGen, a framework for developing generative AI applications, which casts a spotlight on many intriguing possibilities. Large Language Models (LLMs) like GPT-4 and Mixture of Experts (MoE) models are stepping beyond simple tasks and showing the potential to power complex systems. This raises the question: can LLMs act as orchestrators for all of our digital interactions?

The Foundations

To explore this possibility, let’s look at the foundation of an operating system: the kernel. Traditionally, the kernel is the cornerstone of an operating system, acting as the mediator between software and hardware. By analogy, we can propose that LLMs are emerging as a new kind of kernel, which we could label “Soft Kernels”. This time they act as mediators between humans and the digital world.

In computing as we know it today, apps are built on top of operating system kernels. From your browser (Mozilla Firefox, Google Chrome, Apple Safari, or Microsoft Edge) to your favorite music app, every interaction you enjoy on a digital device is possible because the kernel translates software interactions into hardware outcomes.

So when you tap the PLAY ▶ button on Spotify, the music streams out of your iPhone’s speakers.
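To make the “Soft Kernel” analogy a little more concrete, here is a minimal sketch in Python. Everything in it is an assumption made for illustration: the `SoftKernel` class, the `play_music` and `set_alarm` handlers, and the keyword matching that stands in for an LLM deciding which app should handle a request.

```python
from typing import Callable, Dict

# Hypothetical "apps": plain functions standing in for real software or hardware.
def play_music(track: str) -> str:
    return f"Playing '{track}' on the speakers"

def set_alarm(time: str) -> str:
    return f"Alarm set for {time}"

class SoftKernel:
    """Routes a natural-language request to a registered handler, loosely
    the way a kernel routes a system call to a driver. Intent detection is
    stubbed with keyword matching; in the scenario described here, an LLM
    would choose the handler instead."""

    def __init__(self) -> None:
        self._handlers: Dict[str, Callable[[str], str]] = {}

    def register(self, keyword: str, handler: Callable[[str], str]) -> None:
        self._handlers[keyword] = handler

    def handle(self, request: str) -> str:
        text = request.lower()
        for keyword, handler in self._handlers.items():
            if text.startswith(keyword):
                # Everything after the keyword is passed along as the argument.
                argument = request[len(keyword):].strip()
                return handler(argument)
        return "Sorry, no app can handle that request"

kernel = SoftKernel()
kernel.register("play", play_music)
kernel.register("set alarm for", set_alarm)

print(kernel.handle("Play Blue in Green"))
print(kernel.handle("Set alarm for 7:00"))
```

The point of the sketch is the shape, not the matching logic: the human speaks in plain language, and the mediating layer decides which piece of software should act on it.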

Beyond ChatGPT — A look at LLMs, IoT, and AGI

ChatGPT has been amazing; since its release we haven’t stopped talking about it. But ChatGPT is just a chatbot, and like every other chat implementation of GPT-4 and generative AI, it only scratches the surface of what LLMs can do, which somewhat narrows their perceived utility. The AutoGen framework from the Microsoft team, however, shows LLMs at work in a wide range of applications that go well beyond chat.

Looking ahead towards Artificial General Intelligence (AGI) and the Internet of Things (IoT), LLMs are poised to become the operational hub of a sprawling ecosystem of devices and services, with specialized LLMs serving as individual applications on this grand platform.

We are waiting for robotics to bring this full circle. Once IoT goes mainstream, multi-agent conversations could evolve into the operational backbone of several industries, from healthcare and economics to governance and personal assistance. A network of LLMs orchestrating dynamic dialogue could become the standard for Human-Computer Interaction (HCI).

In healthcare, we could use operating LLMs to handle patient intake, guide diagnostic procedures, and automate inventory management, so that interactions between medical practitioners and patients are enhanced by smarter medical devices powered by generative AI. Keeping practitioners involved in these decisions is commonly called Human in the Loop (HITL).

A blueprint for you

Here is a sample blueprint I would consider when thinking about the potential of LLMs as an operating system.

Imagine your city has IoT sensors spread across its roads. These sensors can monitor and manage traffic flow, optimize public transportation schedules, and even assist in emergency responses.

When a major traffic incident occurs, every traffic light receives instructions on how to manage routing, emergency services are automatically notified, and real-time updates beyond the capabilities of Google Maps are sent to citizens, ensuring safety and minimizing chaos and congestion in the affected areas.

This can all happen through dialogue, with every sensor reporting to its central LLM, while the LLM interfaces with each person based on their needs and objectives. Your route from home to the office could easily be optimized so that you never arrive late for work. All of this is powered through a single conversational interface, so that it feels natural and yet highly efficient.
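The blueprint above can be sketched roughly as follows. This is only an illustration under stated assumptions: `SensorReport`, `TrafficOrchestrator`, and the `detours` map are hypothetical names, and a fixed rule stands in for the reasoning a central LLM would perform over the sensor reports.

```python
from dataclasses import dataclass
from typing import Dict, List

@dataclass
class SensorReport:
    sensor_id: str
    road: str
    incident: bool  # True when the sensor detects a blockage or crash

class TrafficOrchestrator:
    """Stand-in for the central LLM: collects sensor reports and decides
    what to tell each party. The decision logic is a fixed rule here; in
    the scenario described, an LLM would reason over the reports."""

    def __init__(self, detours: Dict[str, str]) -> None:
        # Hypothetical map from a blocked road to its alternative route.
        self.detours = detours

    def respond(self, reports: List[SensorReport]) -> List[str]:
        actions: List[str] = []
        # Deduplicate roads so several sensors on one road trigger one response.
        blocked = sorted({r.road for r in reports if r.incident})
        for road in blocked:
            detour = self.detours.get(road, "any open road")
            actions.append(f"traffic lights: divert {road} traffic to {detour}")
            actions.append(f"emergency services: incident reported on {road}")
            actions.append(f"citizens: avoid {road}, use {detour}")
        return actions

orchestrator = TrafficOrchestrator({"Main St": "Oak Ave"})
reports = [
    SensorReport("s1", "Main St", incident=True),
    SensorReport("s2", "Oak Ave", incident=False),
]
for action in orchestrator.respond(reports):
    print(action)
```

Each message is addressed to a different audience (lights, emergency services, citizens), which mirrors the idea of one orchestrator speaking to every participant according to their needs.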

Emerging Paradigms in Generative AI

Andrej Karpathy shared a tweet that sheds more light on LLMs evolving into the kernel processes of a new Operating System, orchestrating a multifaceted digital interaction.

The recent unveiling of Mistral’s 7B LLM also points to a shift in how Large Language Models are engineered. Mistral 7B is still a Transformer, like GPT-3 or LLaMA, but it adds a blend of architectural refinements: grouped-query attention, sliding-window attention, and a byte-fallback BPE tokenizer. Together these aim to make LLMs cheaper and more efficient to operate at scale.
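To give a feel for one of these ideas, here is a small sketch of the attention mask behind sliding-window attention. It is not Mistral’s implementation: the function name `sliding_window_mask` and the toy sizes are assumptions chosen for illustration, and whether the current token counts toward the window is a convention picked here for simplicity.

```python
from typing import List

def sliding_window_mask(seq_len: int, window: int) -> List[List[int]]:
    """Return a seq_len x seq_len mask where mask[i][j] == 1 means
    token i may attend to token j. Attention is causal (j <= i) and
    limited to the `window` most recent positions, including i itself."""
    if window < 1:
        raise ValueError("window must be at least 1")
    return [
        [1 if 0 <= i - j < window else 0 for j in range(seq_len)]
        for i in range(seq_len)
    ]

for row in sliding_window_mask(seq_len=5, window=3):
    print(row)
```

With a window of 3, no row ever contains more than three ones, so the attention work per token is bounded by the window size rather than by the full sequence length. That bound is where the efficiency gain comes from.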

In conclusion, the idea of LLMs as potential operating systems is backed by current advances in AI framework research and by newer architectures like Mistral’s, which could redefine the future of Human-Computer Interaction.

References

AutoGen: Enabling Next-Generation Large Language Model Applications

Microsoft Research Blog on the AutoGen framework for multi-agent LLM applications.

Andrej Karpathy's Tweet

On the evolution of LLMs and the emerging computing paradigm.

Mistral 7B LLM Announcement

Hybrid architecture leveraging innovative attention mechanisms and tokenization.
